A Step by Step Guide to Performing a Panda Audit

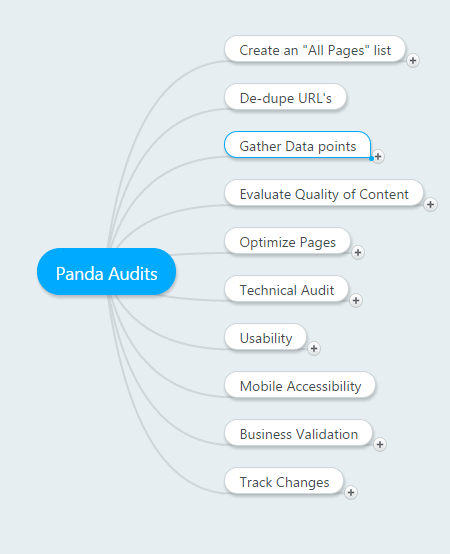

- 1. Introduction

- 2. Create an “All Pages” List

    - 1. Site: Search

    - 2. CMS Export

    - 3. Webmaster Tools

    - 4. Google Analytics

    - 5. Screaming Frog

- 3. Remove Duplicate URLs

- 4. Gather Page Metrics

    - 1. URL Profiler

    - 2. Duplicate Content

    - 3. Content Evaluation

- 5. Optimize Pages

    - 1. Keyword Research

    - 2. Optimize On-Page Elements

    - 3. Refresh/Add Content

- 6. Technical Audit

    - 1. Google Webmaster Tools

    - 2. Page Speed

    - 3. Website Auditor

- 7. Tag Your Content

    - 1. Schema.org

    - 2. Open Graph Tags

    - 3. Canonical Tags

    - 4. Tracking Tags

- 8. Usability Audit

    - 1. Reverse Engineer Your Site Structure

    - 2. Add a Site Search

    - 3. Add Breadcrumbs

    - 4. Pagination

- 9. Mobile Optimization

- 10. Business Validation

- 11. Track Your Changes

Introduction

Panda is an algorithmic filter introduced by Google to evaluate the quality of content on a site. It assigns sites a Quality Score which is then used to modify the rankings in relation to relevancy of a keyword.

If your site is already penalized by Panda, this guide will help you recover your traffic and rankings. If you are looking to improve visibility, then a Panda Audit will help improve the relevancy of your domain & pages, and thus improve your rankings and traffic.

In this document we’ll discuss a step by step system to evaluate your site from different points of view. The goal of a Panda audit is to identify the parts of your site that might be generating a low quality score. Panda is known as a site wide modifier, so if your site has a low quality score, you may have powerful links and be the most relevant site on a topic, but the score may still suppress rankings for your pages.

The first step will be to gather data. We’ll go through the process of identifying all of your “real” pages and gathering various metrics for your pages. Next you’ll want to evaluate these pages to see which should be kept, updated, or removed. Once you have a roadmap of the pages you’d like to keep and drop, you can then perform a technical audit looking at observations from webmaster tools, Google Page Speed, and other technical aspects. As part of this stage you can also look at mobile access and business validation. Finally, you’ll want to look at every page you want to keep and optimize meta tags, navigational elements, and other on-page SEO factors.

Create an “All Pages” List

To begin the content audit process you first need to get a list of all of your pages. This step will be different for every site, based on the volume of pages and how the site was programmed. You’ll want to create a spreadsheet where you add a tab for each of the tools below.

Site: Search

You can start by searching Google for the pages it has indexed for your site with the query site:domain.com. Don’t forget to check both variations: site:www.domain.com and site:domain.com. Unfortunately, I do not know of any tools that will automatically scrape Google to create a URL list, so you may have to do this by hand.

CMS Export

If you’re using a CMS like WordPress, Joomla or Magento, you can export a list of all of the pages on your site. You’ll want the export to include ALL URLs, including category pages, product pages, etc.

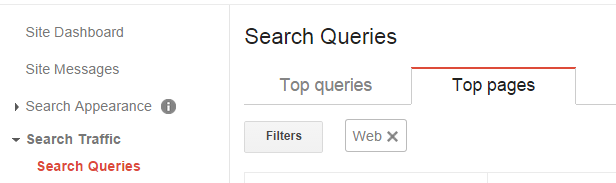

Webmaster Tools

Google Webmaster Tools allows you to download a list of the top pages Google has indexed for your site. Simply navigate to Search Traffic > Search Queries > Top Pages and download the table of URLs, which includes additional information such as Impressions, Clicks, CTR and Avg Position.

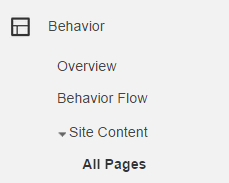

Google Analytics

As with Webmaster Tools, Google Analytics can also create a report with all of the pages of your site. Go to Behavior > Site Content > All Pages. Here you’ll see a list of all of the pages on your site that have at least 1 page view.

Export the results, making sure you get ALL of them from Analytics.

Screaming Frog

Using Screaming Frog, you can crawl your site the way Google would, following link after link to spider and capture as many pages on your site as possible. This is a desktop tool that may take a long time to run, but it’s definitely worth using, as it may uncover additional pages that the other tools didn’t turn up.

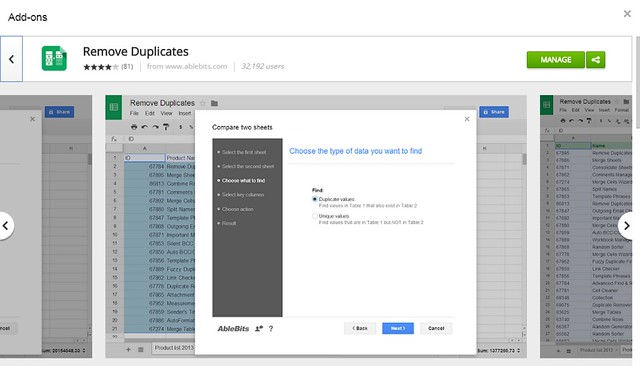

Remove Duplicate URLs

In the first part, your goal was to find every single URL available and add them all to a spreadsheet. Next, you’ll want to copy and paste ONLY the URLs into a single list and use a tool to remove duplicates.

Google Drive has an add-on called “Remove Duplicates” that you can install. Scrapebox also has a tool that allows you to add a list of URLs and remove duplicates. Finally, you can use Excel’s built-in duplicate remover.

This part is important because, in the next step, you’ll gather metrics for each of these URLs, and you don’t want to process the same URL twice.
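If you’d rather script this step than use a spreadsheet add-on, here’s a minimal Python sketch of the idea. The normalization rules (lowercasing the scheme and host, dropping the #fragment, treating an empty path as /) and the function names are assumptions for illustration, not something any of the tools above prescribe, so adjust them to how your site actually treats its URLs.

```python
from urllib.parse import urlsplit, urlunsplit


def normalize_url(url: str) -> str:
    """Return a comparison key for a URL (assumed rules; adjust per site)."""
    parts = urlsplit(url.strip())
    path = parts.path or "/"  # treat "http://example.com" and ".../" alike
    # Keep the query string (it may select different content), drop the fragment.
    return urlunsplit((parts.scheme.lower(), parts.netloc.lower(), path, parts.query, ""))


def dedupe_urls(urls):
    """Keep the first occurrence of each normalized URL, preserving order."""
    seen = set()
    unique = []
    for url in urls:
        if not url.strip():
            continue  # skip blank spreadsheet cells
        key = normalize_url(url)
        if key not in seen:
            seen.add(key)
            unique.append(key)
    return unique
```

Note that www and non-www hosts are left as distinct URLs here, since whether they are really the same page depends on your redirect setup.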

Gather Page Metrics

With your list of URLs in hand, you’ll want to use a few tools to gather as much data as possible about each one.

URL Profiler

This is a relatively new tool that recently burst onto the scene, and it is extremely handy when performing Panda audits. You can use it to automatically gather high-level information about your URLs. URL Profiler is user-friendly with detailed instructions on the site for how to use it, so I won’t go into specifics here. I will, however, list some of the metrics you can consider grabbing when running a crawl:

- 1. Majestic

- 2. Mozscape

- 3. Ahrefs

- 4. Pagerank

- 5. HTTP Status

- 6. PageSpeed

- 7. Google Analytics

- 8. Indexed in Google

- 9. Google Last Cached

- 10. Readability

- 11. Copyscape

You’ll get an export with over 50 metrics that will prove invaluable when analyzing your site. If you connect URL Profiler to your SEO tools and Analytics through their APIs, the data gathering will take some time but will be seamless. You’ll have metrics such as word count, reading time, Dale-Chall readability score, multimedia content on the page, and more.

In terms of link metrics to be used when evaluating your pages, URL Profiler can pull in data including Domain Authority, Page Authority, Mozrank, Trustflow, Citationflow, Referring Domains, etc.

An advantage of using this tool is you can automatically pull in data from Google Analytics which will help you have statistical information about the user engagement metrics of your pages.

Unfortunately, the Webmaster Tools API does not currently allow users to export top pages via their API. The data from WMT would be extremely valuable here. Having an intern or assistant manually add columns for WMT data will help you tremendously.

Duplicate Content

Duplicate Content is one of the top culprits webmasters reference when describing problems with Panda. Duplicating your own pages, or having others crawl and create duplicates of your pages across the web, leads to many problems and it’s very important to address these problems in your audit.

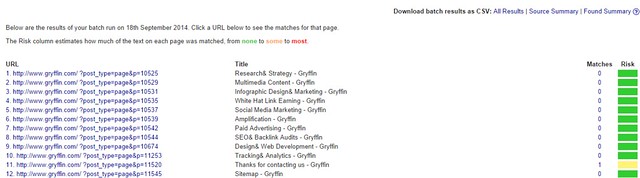

URL Profiler has a feature that will add a link to the Copyscape profile of a page that has duplicates. Generally, I prefer to create a separate report directly from Copyscape, as the report is easier to read and interpret, and you can run a batch search on Copyscape and export your results to CSV. I’ll then do a separate “Copyscape DeDuping” pass as part of the audit, going through every one of the alerts and taking care of them independently. But from an analytic point of view, having duplicate content data available in the URL Profiler export can facilitate future content evaluation.

For the initial report, we’ll use the URL Profiler data that is included with all the other relevant metrics.

Content Evaluation

Now that you have a spreadsheet in front of you with a large amount of data, you can start going through and marking what pages you want to:

- Keep As-Is

- Keep and Refresh Content

- Keep and Add More Content

- 301 Redirect to a new, more relevant page

- 404 the page and remove from Google’s Index

You can add 5 columns to the left of your URL, keeping it frozen, so you can mark what action you’d like to perform for each url.

Sort by Pageviews, low to high

Here you’ll see the URLs that have had little to no traffic during the time frame you chose when you set up your Analytics connection with URL Profiler.

Sorting by Pageviews, low to high, will show you low-value pages on your site that aren’t getting any traffic.

Before you decide to get rid of these pages, look at the Page Authority and Mozrank to see if perhaps it’s an issue of keyword optimization. They may have high Mozrank but are not ranking well because the target keyword is too competitive or because it’s too long-tail and lacks a clear focal point.

Then, you should look at word count. Perhaps these pages simply don’t have enough content to justify keeping, especially if they’re combined with low page authority and Mozrank. Other metrics to consider are Juice links and Ahrefs backlinks.

Sort by Avg Time on Page, high to low

Look at pages where users actually stay on the page for more than 30 seconds. These pages are engaging visitors, so you may want to keep them.

Google definitely uses engagement metrics such as bounce rate, avg time on page, and pages / visit to measure user engagement with your site.

Sort by Word Count, low to high

This view will show you the pages that contain little content. Pages that have fewer than 300 words should be marked to either redirect, remove, or add content. In general you want to make sure your pages all have at least 300 words, while focusing on creating pages that are much more comprehensive and in-depth.

Some of the latest studies show that long-form content, including articles with over 2,000 words, performs better in general. Preliminary research conducted by the team at Gryffin showed that pages performing well in the SERPs with few inbound links and low domain authority have very high word counts and readability scores.

Don’t throw the baby out with the bath water!

You have to be very cautious when performing an audit. Before removing a low-traffic page, check its Page Authority and Mozrank to see if perhaps it’s an issue of keyword optimization. The page may have high Mozrank but not rank well because the target keyword is too competitive, or because it’s too long-tail and lacks a clear focal point.

Perhaps the reason the page doesn’t have much traffic is because it’s not well linked by other pages.

Perhaps the page wasn’t optimized well and doesn’t have keywords in the title or description.

Generally, pages that you’ll want to throw out are pages that have low word count and low metrics across the board. These pages may simply not be worth the effort of trying to keep. Instead, take those down and remove them from the Google index. You can delete them from the site and then go to Webmaster Tools and add them to the “Remove” tool.

Pages that have good statistics can be saved by proper optimization and by adding or refreshing content.

It’s a judgement call – one that you have to make carefully and armed with as much information as possible about every URL.
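To make a first pass over a large spreadsheet, the rules of thumb above can be sketched in a few lines of Python. The 300-word and 30-second thresholds come from this guide; the pageview and Page Authority cutoffs, the field names, and the function name are placeholder assumptions you should tune for your own site. Treat the output as a suggestion to review by hand, not a verdict.

```python
from dataclasses import dataclass

MIN_WORDS = 300       # from this guide
MIN_SECONDS = 30      # from this guide
LOW_PAGEVIEWS = 10    # assumption: tune for your traffic levels
GOOD_AUTHORITY = 30   # assumption: tune for your niche


@dataclass
class Page:
    url: str
    pageviews: int
    avg_time_on_page: float  # seconds
    word_count: int
    page_authority: float


def suggest_action(page: Page) -> str:
    """Suggest one of the audit actions for a page; always review by hand."""
    thin = page.word_count < MIN_WORDS
    low_traffic = page.pageviews < LOW_PAGEVIEWS
    engaging = page.avg_time_on_page > MIN_SECONDS
    has_authority = page.page_authority >= GOOD_AUTHORITY

    # Low metrics across the board: candidate for removal or redirect.
    if thin and low_traffic and not engaging and not has_authority:
        return "Review for 301 or 404"
    if thin:
        return "Keep and Add More Content"
    # Enough content but little traffic: may be a keyword or linking issue.
    if low_traffic:
        return "Keep and Refresh Content"
    return "Keep As-Is"
```

You could run this over every row of your export and write the result into one of the action columns, then sort by that column to review each group.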

Optimize Pages

With a master list of pages that you want to keep, remove, and optimize, now comes the heavy lifting – actually optimizing your pages. At this stage, you’ll want to make sure every single one of the pages that you are keeping is set up to maximize traffic. We recommend doing this section in stages:

1. Keyword Research

If you don’t already have a list of target keywords for your site, create one first. Keywords will be used throughout the on-page optimization, so you’ll need to either (A) do the research concurrently or (B) do it beforehand and then match the best keyword with the best article.

If you’re not sure how to do keyword research, we’ve created an in-depth step by step tutorial for keyword research and competitive analysis. All you have to do is follow this guide and you’ll be a Keyword Research Guru in no time.

2. Optimize On-Page Elements

Whether you do this directly on WordPress, or first on a spreadsheet and then update WordPress or your CMS, is a matter of preference. Personally, I prefer to optimize on a spreadsheet in case I want to make changes as I go down my list and optimize. It’s not rare to find various pages that are relevant to the same keyword and decide to change the focus keyword or title. It would be a beast to have to do this directly on the CMS, versus simply going through the whole process first on a spreadsheet, and then making the changes on WordPress after this part is finished.

If you’d like to use a spreadsheet, here’s our On-Page Optimization Spreadsheet Template for you to use as a guide. Simply fill this out with as many details as possible, constantly referencing your Keyword Research Spreadsheet to make sure that every single page is optimized for the optimal terms.

3. Refresh/Add Content

At Gryffin, we’ll often leave this step for last, as it’s by far the most time consuming. We generally start on this AFTER we’ve made all the other fixes/changes. This starts the recovery/improvement process sooner so you don’t have to wait months for all the new content to be ready, without seeing results.

For this final stage, we go back to all of the pages that have low word count and add content to make them longer.

We refresh old pages and add updated information so that they “become” new. The world changes so quickly that it’s often simpler to add more content reflecting new images, videos or news that have appeared on a subject. We read through the content to see what information is no longer relevant and add evergreen updates.

If there are keyword opportunities that don’t have content currently addressing them, we’ll build new, in-depth content around those keyword opportunities, following all of the “Best Practices” detailed here. Wherever possible, new content would include multi-media to support it, including images and videos.

As you can imagine, this can be a costly, long term project. However, if you want to stay in the Google Organic game, this is something you need to consider as “Quality Content” has finally ascended to the throne of the SEO realm.

Technical Audit

Now that we have a list of pages that we want to remove and keep and have at least optimized the on-page SEO elements, we can move on to our technical audit. This audit will require the use of a variety of tools so that you can take a comprehensive look at the technical elements of your site.

Google Webmaster Tools

Using Webmaster tools, you can get reports directly from Google about ways to improve your site. From crawl errors to page statistics, you can use the data to find ways of improving your site.

In our Webmaster Tools Audit Protocol we take you through a step by step process of using Webmaster Tools as the sole basis of a site audit. You’ll see that we basically just go down all of the links available to webmasters in the interface, looking for errors and ways of improving the site.

The best way to create an actionable list of items to be worked on by your development team is to export the relevant reports in Webmaster Tools and add them to a sheet. In this spreadsheet, you can see the relevant data that was exported during our audit of Gryffin.com, and in the overview, a list of actions required. It also contains a column for “Assigned To”, “Status” and “Date” so we can evaluate the development of the project.

From a technical standpoint, by going through webmaster tools, you’ll have a chance to fix items like structured data errors, pages with short meta descriptions, crawl errors, pages that don’t fetch correctly as Google, and more.

You’ll also be able to see your current robots.txt file, exclusions, and uploaded sitemaps.
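If you want to double-check your robots.txt exclusions against your list of pages to keep, Python’s standard urllib.robotparser module can do it offline. This is a rough sketch: the function name, the sample rules and the example.com URLs are made up for illustration, and Python’s parser may not interpret every rule exactly the way Googlebot does.

```python
from urllib.robotparser import RobotFileParser


def blocked_urls(robots_txt: str, urls, user_agent: str = "Googlebot"):
    """Return the URLs that the given robots.txt text disallows for user_agent."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if not parser.can_fetch(user_agent, url)]


if __name__ == "__main__":
    # Hypothetical rules and pages, for illustration only.
    rules = "User-agent: *\nDisallow: /private/\n"
    keep_list = [
        "https://example.com/",
        "https://example.com/private/report",
    ]
    for url in blocked_urls(rules, keep_list):
        print("Blocked by robots.txt:", url)
```

Any page you plan to keep that shows up in this list deserves a second look, since a blocked page can’t benefit from the rest of your optimization work.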

There’s also additional data that you can work on such as improving interlinking between pages that have few inbound links as well as looking at your target words and creating more content with the words you’re trying to rank for.

Page Speed

Load time has become increasingly important as bandwidth improves and people become more impatient about waiting for content to load. As a result, optimizing your site for page speed has become a fundamental aspect of SEO.

Using Google’s PageSpeed Insights tool, you should start with your high value pages to create a list of items that need to be fixed. Google will give you a list of suggestions for both mobile and desktop. If you are using a templated site, such as a WordPress theme, you may only need to run a few pages and fix the code at the theme level, thus automatically optimizing the site as a whole.

Some of Google’s suggestions may include:

- Enable compression

- Optimize images

- Leverage browser caching

- Minify CSS

- Minify JavaScript

- Minify HTML

- Eliminate render-blocking JavaScript and CSS in above-the-fold content

- Reduce server response time

Make sure you follow both the Mobile and Desktop suggestions. We’ll get into Mobile Optimization in a moment.
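As a rough illustration of why “Enable compression” is usually worth acting on, this Python sketch estimates how much smaller a page’s HTML would be with gzip. The function name and the sample markup are assumptions for illustration; real savings depend on your content and on how your server is configured.

```python
import gzip


def gzip_savings(body: bytes) -> float:
    """Return the fraction of bytes saved by gzip-compressing body (0.0 to 1.0)."""
    if not body:
        return 0.0
    compressed = gzip.compress(body)
    return max(0.0, 1 - len(compressed) / len(body))


if __name__ == "__main__":
    # Hypothetical repetitive template markup, similar to a product listing.
    sample = b"<li class='product'>Example product</li>\n" * 500
    print(f"Estimated savings: {gzip_savings(sample):.0%}")
```

Template-heavy pages tend to compress very well because the same markup repeats many times, which is why this fix often pays off site-wide once it is enabled at the server level.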

Website Auditor

There are other on-page SEO tools, but this one is one of our favorites for 2 reasons: 1. it’s cost-effective and 2. it’s comprehensive. At only $124.75 / year, most webmasters can afford access to this tool as part of their audits. It contains much of the same information that other more expensive tools offer, with a great, easy-to-use interface.

The Website Auditor dashboard will crawl all of your pages and look for elements that could be improved. The main categories are:

- Indexing and crawlability

    - Pages with 404 status code

    - robots.txt file

    - .xml sitemap

- Redirects

    - www and non-www redirect setup

    - Pages with 302 or 301 redirects

    - Pages with meta refresh tags

    - Pages with rel="canonical"

- Encoding and Technical Factors

    - Pages with duplicate rel="canonical" code

    - Pages with Frames

    - Pages with W3C errors and warnings

    - Slow loading, “heavy” pages

- URLs

    - Dynamic URLs

    - URLs that are too long

- Links

    - Broken Links

    - Pages with excessive number of links

- On-Page

    - Empty title tags

    - Duplicate titles

    - Titles that are too long

    - Empty Meta Descriptions

    - Duplicate Meta Descriptions

    - Meta Descriptions that are Too Long

Website Auditor also has an interesting report called “Content Analysis” where you can choose a URL, enter your target keyword, and see how your page is optimized for that keyword. It’ll give you the Total Density for the keyword, including the Title, Body, Description, H1, Links and Bold density for each keyword. To perform this type of in-depth analysis may not be feasible for large sites, but you can consider this level of granularity for your top-level money terms and SEO landing pages.
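If you’re curious what a density figure like this involves, here’s a simplified Python sketch. It assumes density means the words belonging to keyword matches divided by the total words in an element, expressed as a percentage; Website Auditor’s exact formula may differ, and the function names are hypothetical.

```python
import re

WORD_RE = re.compile(r"[a-z0-9']+")


def keyword_density(text: str, keyword: str) -> float:
    """Percentage of words in text that belong to matches of keyword (assumed formula)."""
    words = WORD_RE.findall(text.lower())
    target = WORD_RE.findall(keyword.lower())
    if not words or not target:
        return 0.0
    n = len(target)
    # Count every position where the keyword phrase appears word for word.
    hits = sum(1 for i in range(len(words) - n + 1) if words[i:i + n] == target)
    return 100.0 * hits * n / len(words)


def density_report(elements: dict, keyword: str) -> dict:
    """Density per page element, e.g. {'title': ..., 'h1': ..., 'body': ...}."""
    return {name: round(keyword_density(text, keyword), 1) for name, text in elements.items()}
```

Passing the title, H1, description and body text of a landing page to density_report gives you a quick per-element comparison for your money terms without running the full tool.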

To turn all of this information into actionable steps, you’ll first want to export all of the different reports into a spreadsheet, like this one: Website Auditor Optimizations Checklist.

Once you’ve figured out all of the items that need to be reviewed on your checklist, you can add them to the “overview” tab as actionable items.

Tag your Content

Google has expressed support for microdata tags, stating that they help it better understand content on websites. In a recent Webmaster Hangout, John Mueller from Google stated, “When you clearly mark up what you’re talking about, then that makes it easier for us to say, Oh, this page really is about this topic, because they confirmed this with the schema.org markup or with other kind of metadata markup so that we could really be sure that this page is about this topic.”

Schema.org

Schema markup is code that you add to your website, within your existing HTML elements, to explain what the different parts of your code actually MEAN. The search engines Google, Yahoo and Bing collaborated to create the schema.org project and its tags. When search engines themselves offer us tags to help THEM better understand and rank YOUR content, it’s worth paying attention!

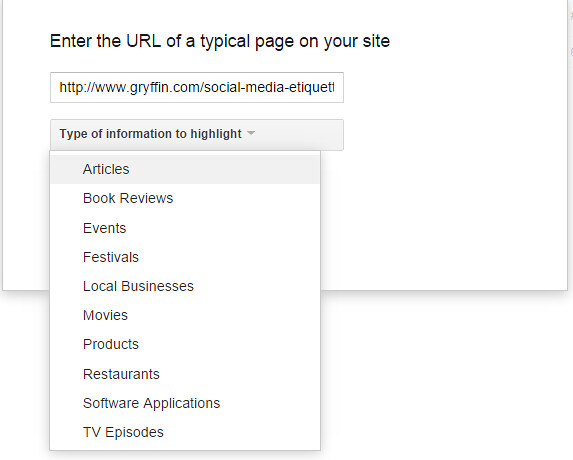

When looking at schema.org markup tags, you have to be aware that there are hundreds of them for you to choose from, depending on the type of content available on your site. This page will explain the basics of schema.org, but for the purposes of a Panda Audit, all we need is what’s provided within Google Webmaster Tools: Data Highlighter.

On the top right, click on “Start Highlighting”. Then enter the URL of one of the pages of your site and click on, “Tag this page and others like it”.

These are some of the types of markup tags available:

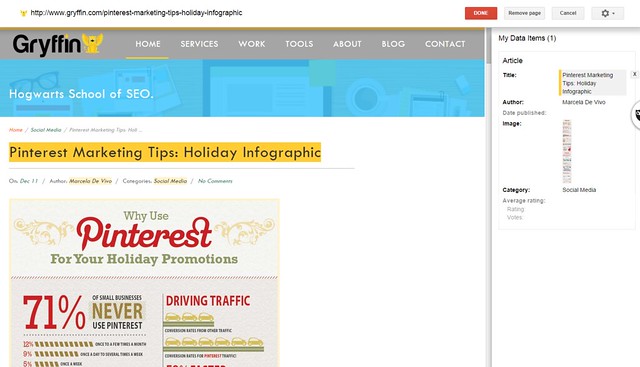

Once you select the type of markup you need, you can start highlighting for each of the relevant schema types:

Here you can see that, within the articles markup area, we can highlight and tag the Title, Author, Date Published, Image, Category and ratings.

After marking up a few pages, Google will understand that the rest of your pages follow the same template, and will apply the tags accordingly.

If you would like the markup to live on your site, you can choose to implement schema.org markup directly at the theme level. This article will walk you through the process if you are using WordPress. You may also choose to use a Plugin such as All In One Schema.org Rich Snippets or Schema Creator by Raven. If you are using a different CMS or ecommerce platform, you may want to research the best way to add these tags directly so they’re built into the code of your site.
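For a sense of what on-page markup looks like, here’s a minimal Python sketch that builds schema.org Article markup as a JSON-LD script block. Note that JSON-LD is a different syntax from the inline microdata described above; it’s used here only because it is easy to generate from a template. The function name and sample values are placeholders, while headline, author, datePublished, image and articleSection are schema.org Article properties.

```python
import json


def article_json_ld(headline, author, date_published, image_url, section):
    """Build a JSON-LD <script> block describing an Article (illustrative sketch)."""
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {"@type": "Person", "name": author},
        "datePublished": date_published,  # ISO 8601 date, e.g. "2015-01-15"
        "image": image_url,
        "articleSection": section,
    }
    body = json.dumps(data, indent=2)
    # Escape "</" so a value can never close the script element early.
    body = body.replace("</", "<\\/")
    return '<script type="application/ld+json">\n' + body + "\n</script>"


if __name__ == "__main__":
    # Placeholder values, for illustration only.
    print(article_json_ld(
        "A Step by Step Guide to Performing a Panda Audit",
        "Jane Doe",
        "2015-01-15",
        "https://example.com/image-1.png",
        "SEO",
    ))
```

Whichever syntax you choose, it’s worth running a few pages through Google’s structured data testing tools afterwards to confirm the markup is being read as intended.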

Open Graph Tags

According to ogp.me, “The Open Graph protocol enables any web page to become a rich object in a social graph.”

Now this is not necessarily a factor that impacts Google organic, but it does affect social sharing which in turn impacts organic. Content that receives a social buzz will generate the user engagement and links that help it perform better in Google organic.

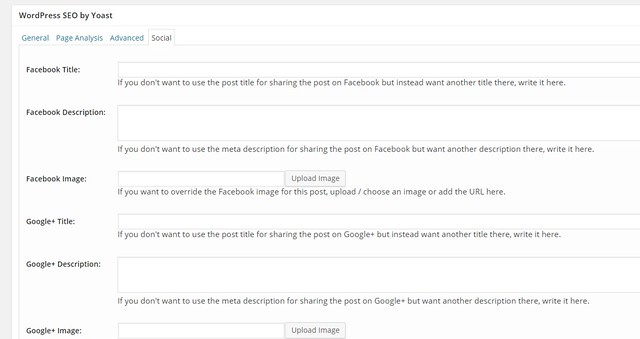

Open graph tags optimize how titles, descriptions and images appear in social streams. If you use WordPress, and have installed the Yoast SEO plugin, then the markup will already be built in to your WordPress pages. All you have to do is click on the “Social” part of your Yoast optimization area, and add the required information.

If you do not use WordPress, Moz has a great resource for you, which contains all of the tags you need to add to your pages.

Make sure you have the functionality set up to optimize your pages with open graph tags, as you can then perform these updates when you go through the Page optimization phase to follow.
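If you need to add these tags yourself, the following Python sketch shows the general shape of the markup. The og:title, og:type, og:url, og:image and og:description properties come from the Open Graph protocol at ogp.me; the function name and sample values are placeholders for illustration.

```python
from html import escape


def open_graph_tags(title, description, image_url, page_url, og_type="article"):
    """Return Open Graph <meta> tags for a page's <head> (illustrative sketch)."""
    properties = {
        "og:title": title,
        "og:type": og_type,
        "og:url": page_url,
        "og:image": image_url,
        "og:description": description,
    }
    return "\n".join(
        # Escape values so quotes or ampersands cannot break the attribute.
        f'<meta property="{name}" content="{escape(value, quote=True)}" />'
        for name, value in properties.items()
    )


if __name__ == "__main__":
    print(open_graph_tags(
        "A Step by Step Guide to Performing a Panda Audit",
        "How to find and fix low-quality pages.",
        "https://example.com/image-1.png",
        "https://example.com/panda-audit",
    ))
```

The output goes inside the head of each page, ideally generated from the same title and description fields you fill in during on-page optimization.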

Canonical Tags

These tags appear in the HTML head of a web page, in the same area where you have the title and meta description.

If several URLs on your site serve essentially the same page (for example, the same page reached with different sorting or tracking parameters), you should use the canonical tag to tell Google that all inbound links and authority should be credited to the canonical URL.

This is the tag that should appear in the header:

<link rel="canonical" href="http://gryffin.co/blog" />

As you audit your pages, look for instances where pages should be grouped in canonical sets, and add the canonical tag so the engines know which page should be credited.

It’s ideal to always add a canonical tag on a page to itself, so if accidental duplicates are created, the root page always gets proper credit.
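Here’s a small Python sketch of how a template could generate a self-referencing canonical tag. It assumes that the utm_ tracking parameters and the #fragment never change the page content, which you should confirm for your own site; the function names and the parameter list are illustrative.

```python
from html import escape
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Assumption: these parameters only track campaigns and never change content.
TRACKING_PARAMS = {"utm_source", "utm_medium", "utm_campaign", "utm_term", "utm_content"}


def canonical_url(url: str) -> str:
    """Strip tracking parameters and the fragment to get the canonical URL."""
    parts = urlsplit(url)
    kept = [
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if key.lower() not in TRACKING_PARAMS
    ]
    return urlunsplit((parts.scheme, parts.netloc.lower(), parts.path or "/", urlencode(kept), ""))


def canonical_tag(url: str) -> str:
    """Return the <link rel="canonical"> tag for the requested URL."""
    return f'<link rel="canonical" href="{escape(canonical_url(url), quote=True)}" />'
```

With this in place, a visit to http://gryffin.co/blog?utm_source=newsletter would still emit a canonical tag pointing at http://gryffin.co/blog, so accidental duplicates credit the root page.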

Tracking Tags

If you are promoting your site through Facebook or Adwords, you may have multiple tags on various pages of your site. From your Analytics to your conversion codes, to Facebook remarketing and conversion tracking, you’ll want to consolidate your tags as much as possible.

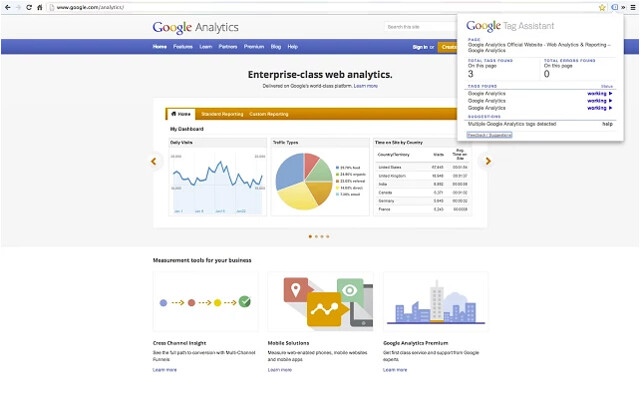

Consider using Google’s Chrome Extension called Tag Assistant to check your pages:

If you do have multiple tags, consider using Google Tag Manager to consolidate all of your Google tracking and conversion codes.

Usability Audit

We’ve briefly touched on the importance of user engagement metrics; now we’ll discuss how to incorporate them into your audit.

Google is highly focused on user experience beyond simply ordering results based on relevance. Providing relevant results isn’t enough. They are striving beyond relevancy to guarantee a positive user experience. If they send you free traffic, you need to make sure you maximize that traffic so they keep sending more your way.

You need to make sure your site is optimized for usability so that your user engagement metrics reflect this. We’ve already included Google Analytics data in our evaluation of individual pages, but at this stage, it may be helpful to revisit it in order to gain an overall sense of how users are interacting with your site. Here’s a great report you can import into your Google Analytics account.

Reverse Engineer your Site Structure

To start, use a mindmapping tool to reverse engineer your site navigation. Look at the different levels and groupings of pages, and determine whether your site architecture makes sense.

Here’s an example of a mindmap based on site structure:

Depending on the size of your site, you may not be able to drill down to individual pages. If you can’t, make sure you have a cohesive map/strategy for your main category and high level pages.

Orphan pages are a huge waste of potential, so in this section of your audit, you want to “tighten up” your site infrastructure to make sure all of your pages, at every level, have enough internal links to support them. Using pagination, HTML site maps, and a “related pages” plugin can help improve the internal connectivity of your pages.
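If your crawler can export which pages link to which, finding orphans is a simple set comparison. The Python sketch below assumes you have the full page list from the earlier steps plus a mapping of each crawled page to the internal links found on it; the function name and the sample paths are hypothetical.

```python
def find_orphans(all_pages, links, home="/"):
    """Return pages that no other page links to (the home page is exempt)."""
    linked = {
        target
        for source, targets in links.items()
        for target in targets
        if target != source  # a page linking to itself doesn't count
    }
    return sorted(page for page in all_pages if page not in linked and page != home)


if __name__ == "__main__":
    # Hypothetical site: page list from your master sheet, links from a crawl.
    pages = {"/", "/blog", "/blog/post-1", "/old-landing"}
    internal_links = {
        "/": ["/blog"],
        "/blog": ["/blog/post-1", "/"],
        "/old-landing": ["/old-landing"],
    }
    print(find_orphans(pages, internal_links))
```

Pages that appear in Analytics or your CMS export but not in the crawl are the most likely orphans, since a crawler can only discover pages by following links.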

Add a Site Search

If you don’t already have the ability for users to search your site, consider adding a site search engine. This will help users to quickly/easily find additional content on your site, thus increasing their Time on Site and Pages Visited, while reducing Bounce Rate.

Add Breadcrumbs

In Google’s Search Engine Optimization Starter Guide, they discuss how breadcrumbs can help with SEO and user experience. If you’re using a CMS like WordPress, activating breadcrumbs should be a quick and easy fix.

In the same guide, Google mentions the importance of using keyword optimized anchor text for INTERNAL links. This is a deprecated tactic for link building, but when it comes to linking to other pages of your site, this can still help Google understand what your pages are about.

If your pages are well optimized with your keyword in your meta title, your breadcrumbs will be automatically optimized.

Pagination

If you’re not already, make sure you are using pagination to help get deep content indexed and to send links to internal pages.

Mobile Optimization

In recent conferences, hangouts, and other appearances by Google employees, the reality of the shift to mobile searching is being clearly expressed. Mobile searches are increasing by the minute, making mobile optimization a fundamental aspect of SEO.

As part of your mobile optimization audit, you’ll want to evaluate how your site shows up in mobile. Google recommends responsive design, but many sites choose to use mobile specific websites.

The key elements of your mobile audit include:

- Is your website mobile friendly?

- Mobile site

    - Are your redirects working properly?

    - Do you allow users access to the Desktop version?

    - Are you using canonical tags on your mobile pages?

    - Did you upload a mobile XML sitemap?

- Responsive

    - How does your site look on ALL devices, including mobile devices and tablets?

    - Are links easy to click?

    - Can users click to call directly from your site?

    - Are you using a viewport tag?

- Page Load Times

    - How quickly does your page load on mobile?

- Mobile site

In our technical audit, we reviewed PageSpeed Insights, which contains a section about mobile. If you already implemented those fixes, it’s possible that many of the page speed related issues relevant to mobile will have already been taken care of.
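One item on the checklist above, the viewport tag, is easy to check automatically. This Python sketch uses the standard html.parser module to look for a meta viewport tag in a page’s HTML; the class and function names are illustrative, and you would feed it the HTML of your own templates.

```python
from html.parser import HTMLParser


class ViewportFinder(HTMLParser):
    """Records the content of the first <meta name="viewport"> tag it sees."""

    def __init__(self):
        super().__init__()
        self.content = None

    def handle_starttag(self, tag, attrs):
        if tag == "meta" and self.content is None:
            attributes = dict(attrs)
            if (attributes.get("name") or "").lower() == "viewport":
                self.content = attributes.get("content") or ""


def viewport_content(html: str):
    """Return the viewport tag's content attribute, or None if the tag is missing."""
    finder = ViewportFinder()
    finder.feed(html)
    return finder.content
```

A page that returns None here is missing the tag entirely, which is worth fixing at the theme level so every page inherits it.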

Business Validation

Google has declared all-out war against Black Hat SEO, link buyers, burn and churn optimization practices, etc. What do most of these have in common? They create fly-by-night domains that are not directly associated with a legitimate company.

How do you create legitimacy in Google’s eye for your brands, and establish trust signals? By adding business validation markers to your domain. Some of these include:

- About Us page

- Contact Numbers (clickable from mobile)

- Physical Address

- Terms of Service

- Privacy Policy

- Testimonials

- Social Profiles

- Industry Associations

- Secure Banners

Make sure the content on your terms of service and your privacy policy pages is unique and not copied over from other sites.

In addition, mentioning associations you are a member of, such as the Better Business Bureau and your local Chamber of Commerce, can indicate trust.

Track your Changes!

Having completed your technical audit and implemented your changes, now you’ll want to make sure you are carefully following how your site reacts to these changes.

Some KPIs for you to track include:

- Keyword rankings

- Page Depth

- Bounce Rate

- CTR in WMT

- Pages / Session

- Goal Completions

- Avg Time on Site

- Comments

- Social Shares

- Sales

You can annotate your changes in Analytics so you can look for trends and responses in terms of traffic and referrals.

You’ll want to keep a close eye on these metrics to determine if the changes made to the site were enough to create an improvement in traffic and rankings.
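A simple way to compare a period before your changes with a period after is to compute the percent change for each KPI. This Python sketch assumes you’ve exported the same metrics for both periods; the function names, metric names and numbers in the example are made up for illustration.

```python
def percent_change(before, after):
    """Percent change from before to after, or None when before is zero."""
    if before == 0:
        return None  # percent change is undefined from a zero baseline
    return (after - before) / before * 100


def kpi_changes(before: dict, after: dict) -> dict:
    """Percent change for every KPI present in both periods."""
    return {
        name: percent_change(before[name], after[name])
        for name in before
        if name in after
    }


if __name__ == "__main__":
    # Hypothetical exports for equal-length periods before and after the audit.
    pre_audit = {"sessions": 200, "goal_completions": 0}
    post_audit = {"sessions": 250, "goal_completions": 4}
    print(kpi_changes(pre_audit, post_audit))
```

Keep in mind that for metrics like Bounce Rate a negative change is the improvement, and that comparing equal-length periods helps avoid misleading results.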

Yes, a Panda Audit REALLY IS Worth It!

We know – this is a lot of work. Businesses wanting to continue receiving traffic from Google Organic need to take Panda seriously. As competition increases for traffic, Google becomes increasingly demanding. By performing a Panda Audit, you improve your chances of recovering from a penalty while increasing your ability to rank well in the future.
